Misc Research

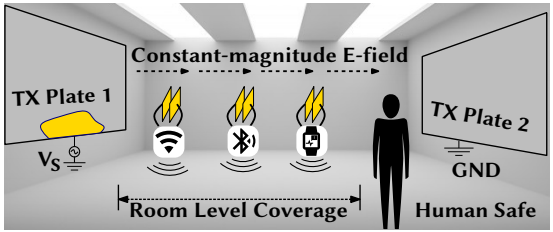

Internet-of-things (IoT) devices are becoming widely adopted, but they are increasingly constrained by limited power: power cords cannot reach billions of devices, and batteries do not last forever. Existing systems address the issue with ultra-low-power designs and energy scavenging, which inevitably limit functionality. To unlock the full potential of ubiquitous computing and connectivity, our solution, “Capttery”, uses capacitive power transfer (CPT) to provide battery-like wireless power delivery. Capttery is the first room-level (~5 m) CPT system, delivering continuous milliwatt-level wireless power to multiple IoT devices concurrently. Unlike conventional one-to-one CPT systems that target kilowatt power in a controlled and potentially hazardous setup, Capttery is designed to be human-safe and invariant in a practical and dynamic environment. Our evaluation shows that Capttery can power end-to-end IoT applications across a typical room, where new receivers can be easily added in a plug-and-play manner.

Chi Zhang, Siddharth Kumar, and Dinesh Bharadia

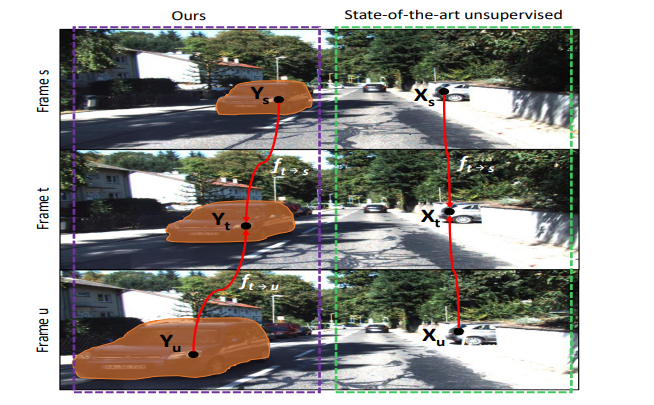

Unsupervised learning for geometric perception (depth, optical flow, etc.) is of great interest to autonomous systems. Recent works on unsupervised learning have made considerable progress on perceiving geometry; however, they usually ignore the coherence of objects and perform poorly in dark and noisy environments. In contrast, supervised learning algorithms, which are robust, require large labeled geometric datasets. This paper introduces SIGNet, a novel framework that provides robust geometry perception without requiring geometrically informative labels. Specifically, SIGNet integrates semantic information to make depth and flow predictions consistent with objects and robust to low-light conditions. SIGNet is shown to improve upon state-of-the-art unsupervised learning for depth prediction by 30% (in squared relative error). In particular, SIGNet improves performance on the dynamic object class by 39% in depth prediction and 29% in flow prediction. Our code will be made available online.

Yue Meng, Yongxi Lu, Aman Raj, Samuel Sunarjo, Rui Guo, Tara Javidi, Gaurav Bansal, Dinesh Bharadia
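
For readers unfamiliar with the squared relative error cited in the SIGNet abstract, here is a minimal sketch of how that metric is commonly computed in depth-estimation benchmarks, and of how a relative improvement such as "30%" follows from two error values. The function names and the sample numbers are illustrative assumptions, not taken from the paper or its code.

```python
def squared_relative_error(pred, gt):
    """Mean of (pred - gt)^2 / gt over pixels with valid ground truth (gt > 0)."""
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    if not pairs:
        raise ValueError("no valid ground-truth depths")
    return sum((p - g) ** 2 / g for p, g in pairs) / len(pairs)


def relative_improvement(baseline_error, new_error):
    """Fractional error reduction relative to a baseline (0.30 means 30%)."""
    return (baseline_error - new_error) / baseline_error


# Hypothetical depths in meters, purely for illustration.
predicted = [2.0, 4.0]
ground_truth = [1.0, 4.0]
error = squared_relative_error(predicted, ground_truth)
```

Because the squared difference is divided by the true depth, a given error on a nearby object is penalized more heavily than the same error on a distant one.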

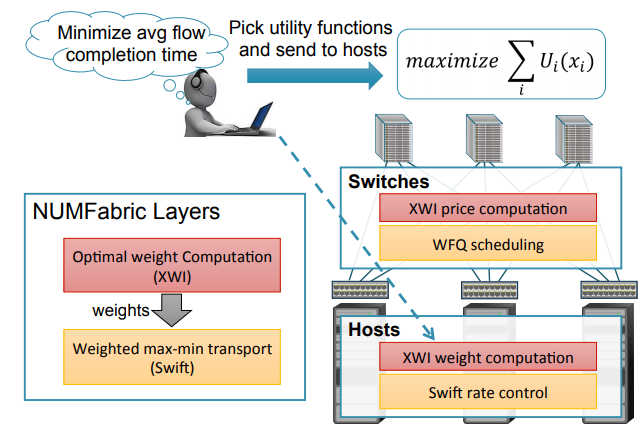

Kanthi Nagaraj, Dinesh Bharadia, Hongzi Mao, Sandeep Chinchali, Mohammad Alizadeh, Sachin Katti